How to Deploy a Sample Python Application to a Managed EKS Environment in AWS

A step-by-step guide

Table of contents

- Overview

- Source Code

- Set up your virtual environment

- Generate requirements.txt

- Prepare Docker Image

- Image Security

- IRSA (IAM Role for Service Accounts)

- Deploying and running the application

- Port forwarding to test the application locally

- CloudTrail to verify use of the IRSA Role

- GitHub workflow to build and push the Docker image

- Final thoughts

Overview

We previously looked at deploying a managed EKS cluster and node group on AWS. This blog post assumes that you have a bootstrapped Kubernetes environment to which you can deploy a sample application as you follow along.

While following a few EKS tutorials from the EKS Workshop, I came across the Sample Go Webserver App written by Michael S. Fischer. That web server is written in Go, so I decided to rewrite it in Python 3.

This simple app renders a homepage with useful information about:

- The location of the pod inside the cluster: instance ID and Availability Zone

- Client information: IP address

- Pod information: namespace and name

- Application name
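To make the list above concrete, here is a minimal sketch of how a Python app could gather those values. It assumes the pod name and namespace arrive as environment variables (the names APP_NAME, POD_NAME and POD_NAMESPACE are hypothetical) and that the instance ID and Availability Zone come from the EC2 Instance Metadata Service (IMDS). It is an illustration, not the code in the repository.

```python
import urllib.request
from typing import Callable, Mapping, Optional

# EC2 Instance Metadata Service (IMDS) paths for the node the pod runs on.
INSTANCE_ID_URL = "http://169.254.169.254/latest/meta-data/instance-id"
AZ_URL = "http://169.254.169.254/latest/meta-data/placement/availability-zone"


def fetch_metadata(url: str, timeout: float = 1.0) -> Optional[str]:
    """Return an IMDS value, or None when IMDS is unreachable (e.g. off EC2).

    Assumption: IMDSv1-style GET. Nodes that enforce IMDSv2 require a session
    token first; without one the request fails and this returns None too.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.read().decode()
    except OSError:  # URLError, HTTPError and timeouts are all OSError subclasses
        return None


def collect_page_info(
    environ: Mapping[str, str],
    client_ip: str,
    fetch: Callable[[str], Optional[str]] = fetch_metadata,
) -> dict:
    """Gather the values the homepage shows; missing data becomes "unknown"."""
    return {
        # Hypothetical environment variable names, chosen for illustration.
        "app_name": environ.get("APP_NAME", "eks-app"),
        "pod_name": environ.get("POD_NAME", "unknown"),
        "pod_namespace": environ.get("POD_NAMESPACE", "unknown"),
        "instance_id": fetch(INSTANCE_ID_URL) or "unknown",
        "availability_zone": fetch(AZ_URL) or "unknown",
        "client_ip": client_ip,
    }
```

In a Flask view, client_ip could come from request.remote_addr, and os.environ can be passed as environ. Injecting fetch keeps the function testable without a real EC2 instance.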

Source Code

The source code for this application is available on GitHub.

Clone the repository:

git clone https://github.com/boltdynamics/eks-app

Configuration file

Resource names are based on the configuration file defaults.conf, stored under the settings directory.

The Makefile uses the values defined in the configuration file to create the Docker image, the IAM Role for Service Accounts, and the Kubernetes namespace and deployment.

Change the values in the configuration file as needed before running any make commands.
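The exact format of defaults.conf is not shown here. As a sketch, assuming it holds simple Makefile-style KEY=VALUE lines, the snippet below parses such a file and derives resource names from it. The keys APP_NAME and DOCKER_USERNAME and the naming scheme are hypothetical, meant only to show how one config file can drive every resource name.

```python
from typing import Dict


def parse_conf(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines (also KEY ?= VALUE / KEY := VALUE), skipping blanks and # comments."""
    values: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            raise ValueError(f"not a KEY=VALUE line: {raw!r}")
        # Drop Make assignment modifiers such as "?" in "?=" or ":" in ":=".
        values[key.strip().rstrip("?:").strip()] = value.strip()
    return values


def resource_names(conf: Dict[str, str]) -> Dict[str, str]:
    """Derive resource names from hypothetical APP_NAME and DOCKER_USERNAME keys."""
    app = conf["APP_NAME"]
    return {
        "image": f"{conf['DOCKER_USERNAME']}/{app}",
        "namespace": app,
        "service_account": f"{app}-sa",
        "deployment": app,
    }
```

Changing a single value such as APP_NAME then renames every derived resource consistently, which is the point of keeping names in one file.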

Set up your virtual environment

The Python version in use is 3.9, as set in the Pipfile.

Run make install to install the dependencies and set up your virtual environment. These dependencies are:

Generate requirements.txt

Run make generate-requirements to generate a requirements.txt file that records the environment's current package list.
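The command behind the make target is not shown here; tools such as pip freeze do this job. As a rough sketch of the idea, the snippet below uses the standard library's importlib.metadata to list installed distributions as pinned name==version lines. Unlike pip freeze, it does not special-case editable installs or skip packaging tools, and the write_requirements helper is hypothetical.

```python
from importlib import metadata
from typing import Iterable, List, Tuple


def freeze_lines(dists: Iterable[Tuple[str, str]]) -> List[str]:
    """Format (name, version) pairs as sorted, de-duplicated name==version pins."""
    pins = {f"{name}=={version}" for name, version in dists if name}
    return sorted(pins, key=str.lower)


def installed_distributions() -> List[Tuple[str, str]]:
    """Return (name, version) for every distribution visible to this interpreter."""
    return [(d.metadata.get("Name"), d.version) for d in metadata.distributions()]


def write_requirements(path: str = "requirements.txt") -> None:
    """Record the current environment's packages, one pin per line."""
    with open(path, "w", encoding="utf-8") as fh:
        fh.write("\n".join(freeze_lines(installed_distributions())) + "\n")
```

Pinning exact versions is what makes the Docker build reproducible: the image installs the same packages you tested in the virtual environment.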

Prepare Docker Image

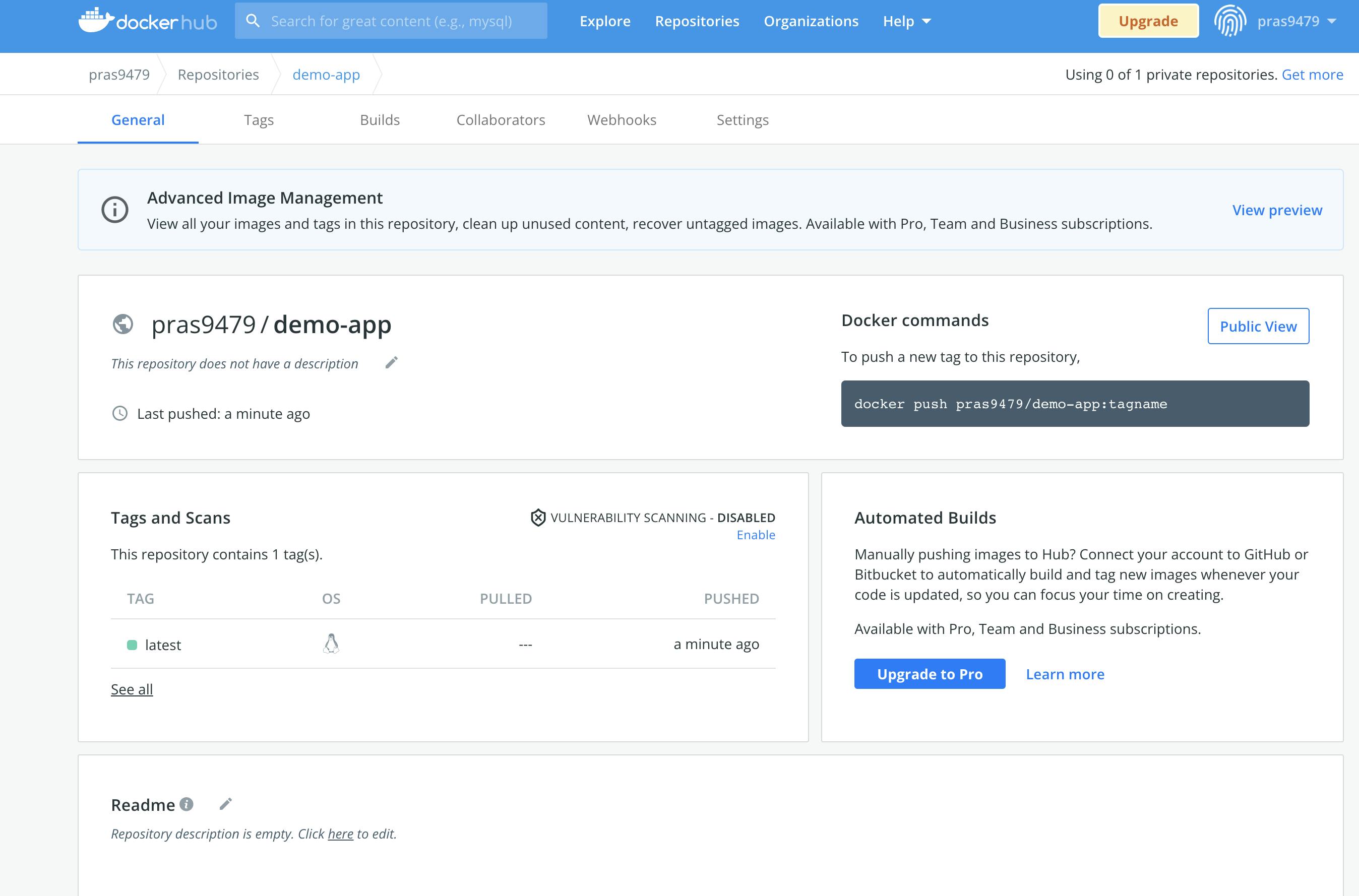

To keep things simple, we will build a Docker image for the Flask app and push it to Docker Hub as a public image, so we can use it from anywhere for testing and deployments.

Run make build-eks-app to build the Docker image.

The Docker image is built on the python:alpine3.15 base image to keep it small.

The easiest way to deploy and test the image is to push it to Docker Hub using your credentials or an access token. Update your Docker username in the settings/defaults.conf configuration file.

Run make push-app-to-docker-hub to push the image to Docker Hub.
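The make targets wrap Docker CLI calls. A minimal sketch of the equivalent build and push commands, assuming a username/app:tag image reference and the repository root as build context (both assumptions, not taken from the Makefile), could look like this:

```python
import shlex
from typing import List


def image_ref(username: str, app: str, tag: str = "latest") -> str:
    """Docker Hub image reference in the form username/repository:tag."""
    return f"{username}/{app}:{tag}"


def build_and_push_commands(
    username: str, app: str, tag: str = "latest", context: str = "."
) -> List[List[str]]:
    """Argument lists for `docker build` followed by `docker push`."""
    ref = image_ref(username, app, tag)
    return [
        ["docker", "build", "-t", ref, context],
        ["docker", "push", ref],
    ]


def render(commands: List[List[str]]) -> str:
    """Show the commands as shell-quoted lines, e.g. for a dry run."""
    return "\n".join(shlex.join(cmd) for cmd in commands)
```

Each list can be passed to subprocess.run(cmd, check=True) after docker login; keeping arguments as lists avoids shell-quoting mistakes.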

Image Security

In the Docker image, the user is set to 1001 and the container exposes port 5000.

In the deployment spec under kubernetes/app.yaml, runAsNonRoot is set to true so that Kubernetes refuses to start the container as root. This is backed by allowPrivilegeEscalation set to false, which prevents a process from gaining more privileges than its parent. Similarly, readOnlyRootFilesystem is set to true.

IRSA (IAM Role for Service Accounts)

IAM Roles for Service Accounts (IRSA) lets us scope the AWS permissions of a Kubernetes service account to an IAM Role that we create.

Any container running in a pod that uses the service account can access AWS resources only with the limited permissions defined in that IAM Role. This allows us to follow the principle of least privilege.

For this example, we will create an IAM Role with the following permissions (the template is at cloudformation/irsa-role.yaml):

- ec2:DescribeNetworkInterfaces
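An IRSA role needs two policies: a trust policy that lets the cluster's OIDC provider assume the role on behalf of one specific service account, and a permissions policy. The sketch below builds both as Python dicts. The account ID, OIDC issuer, namespace and service account name are placeholders, and the CloudFormation template in the repository may be structured differently.

```python
import json
from typing import Dict


def irsa_trust_policy(account_id: str, oidc_issuer: str, namespace: str, service_account: str) -> Dict:
    """Trust policy letting one Kubernetes service account assume the role via OIDC."""
    issuer = oidc_issuer
    if issuer.startswith("https://"):
        issuer = issuer[len("https://"):]
    provider_arn = f"arn:aws:iam::{account_id}:oidc-provider/{issuer}"
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Federated": provider_arn},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {"StringEquals": {
                # Only this namespace/service account pair may assume the role.
                f"{issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
                f"{issuer}:aud": "sts.amazonaws.com",
            }},
        }],
    }


# EC2 Describe* actions do not support resource-level permissions, hence "*".
PERMISSIONS_POLICY = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": "ec2:DescribeNetworkInterfaces",
        "Resource": "*",
    }],
}


def as_json(policy: Dict) -> str:
    """Serialize a policy document, e.g. for the AWS CLI or a template."""
    return json.dumps(policy, indent=2)
```

The sub condition is what enforces least privilege: pods using any other service account cannot assume this role, even in the same cluster.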

We add the IAM Role's ARN as an annotation on the Kubernetes service account, and we store the ARN as an SSM parameter so it can be discovered when needed.

Run make deploy-irsa-role to deploy the IAM Role to AWS.
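The annotation key IRSA relies on is eks.amazonaws.com/role-arn. As a sketch (names are placeholders), the snippet below builds such a ServiceAccount manifest. For pods using an annotated service account, EKS injects the AWS_ROLE_ARN and AWS_WEB_IDENTITY_TOKEN_FILE environment variables, which the AWS SDKs use to obtain credentials; the hypothetical irsa_env_present helper checks for them from inside a pod.

```python
import json
from typing import Dict, Mapping

ROLE_ARN_ANNOTATION = "eks.amazonaws.com/role-arn"


def service_account_manifest(name: str, namespace: str, role_arn: str) -> Dict:
    """ServiceAccount annotated with the IRSA role ARN (e.g. read from SSM)."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {ROLE_ARN_ANNOTATION: role_arn},
        },
    }


def irsa_env_present(environ: Mapping[str, str]) -> bool:
    """True if the variables EKS injects for IRSA are visible in this process."""
    return all(environ.get(k) for k in ("AWS_ROLE_ARN", "AWS_WEB_IDENTITY_TOKEN_FILE"))


def to_json(manifest: Dict) -> str:
    """kubectl apply accepts JSON manifests as well as YAML."""
    return json.dumps(manifest, indent=2)
```

The role ARN itself could be fetched with aws ssm get-parameter --name <parameter> --query Parameter.Value --output text; the parameter name used by the repository is not shown here.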

Deploying and running the application

Running the Docker app locally is not ideal, because the application code is designed to run inside a Kubernetes environment.

Run make deploy-eks-app to deploy:

- A namespace for the application

- A service account for the application, annotated with the IRSA Role we created earlier

- A deployment of the application with 2 replicas
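The resources in the list above can be sketched as Kubernetes manifests built in Python. This is an assumption-laden illustration, not the contents of kubernetes/app.yaml: it uses a two-replica Deployment, the service account, port 5000, the hardened securityContext described earlier, and the Kubernetes downward API to expose the pod's own name and namespace as the hypothetical POD_NAME and POD_NAMESPACE variables.

```python
from typing import Dict


def namespace_manifest(name: str) -> Dict:
    return {"apiVersion": "v1", "kind": "Namespace", "metadata": {"name": name}}


def deployment_manifest(
    name: str, namespace: str, image: str, service_account: str, replicas: int = 2
) -> Dict:
    labels = {"app": name}  # selector and pod template labels must match
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "serviceAccountName": service_account,  # links pods to the IRSA role
                    "containers": [{
                        "name": name,
                        "image": image,
                        "ports": [{"containerPort": 5000}],
                        "env": [
                            # Downward API: the pod's own name and namespace.
                            {"name": "POD_NAME",
                             "valueFrom": {"fieldRef": {"fieldPath": "metadata.name"}}},
                            {"name": "POD_NAMESPACE",
                             "valueFrom": {"fieldRef": {"fieldPath": "metadata.namespace"}}},
                        ],
                        "securityContext": {
                            "runAsNonRoot": True,
                            "runAsUser": 1001,
                            "allowPrivilegeEscalation": False,
                            "readOnlyRootFilesystem": True,
                        },
                    }],
                },
            },
        },
    }
```

Because these values only exist inside a pod, this also suggests why a plain local run has less information to display.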

That said, it is possible to run the app locally with the make run-eks-app command, though with some unexpected results.

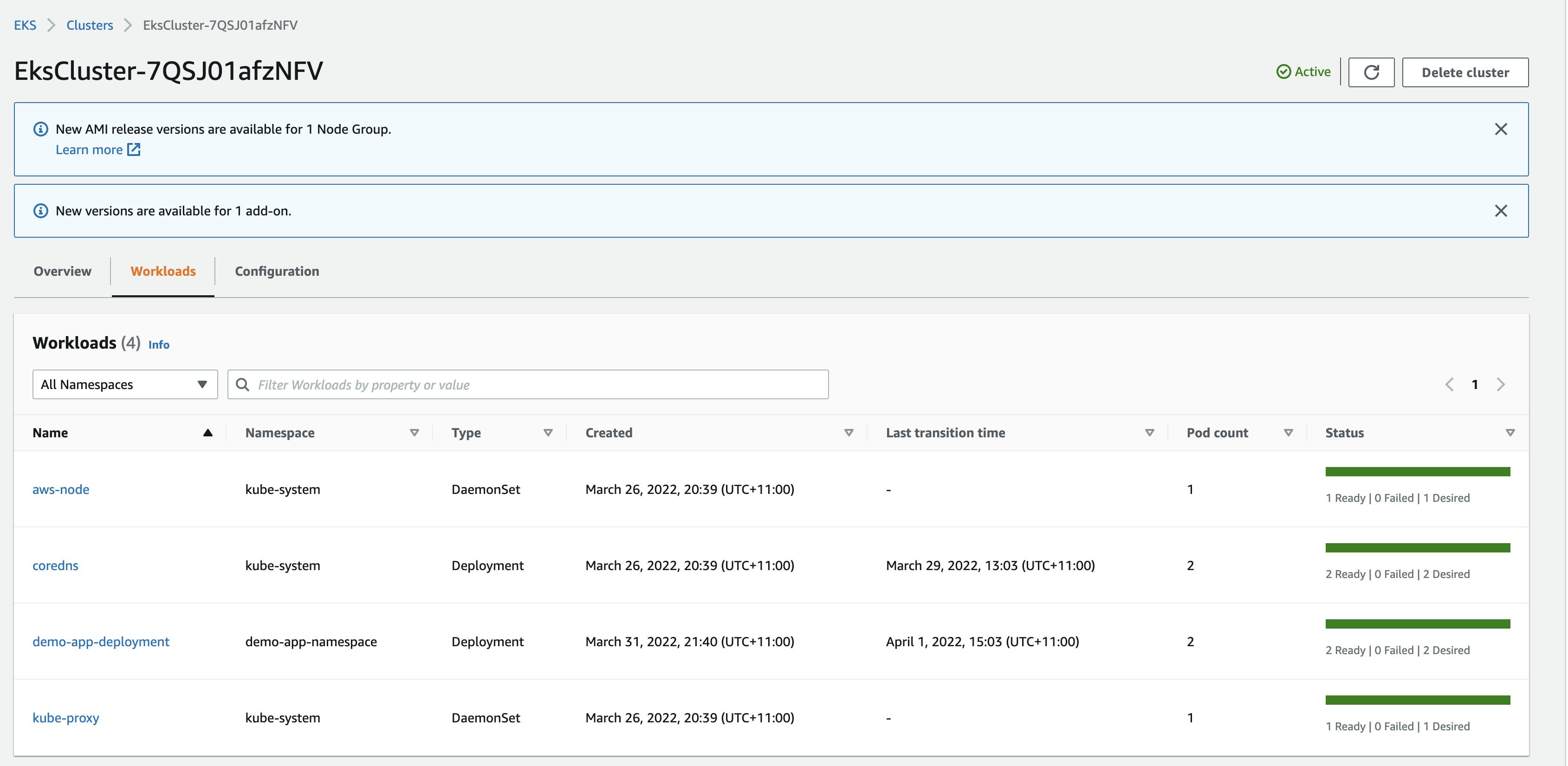

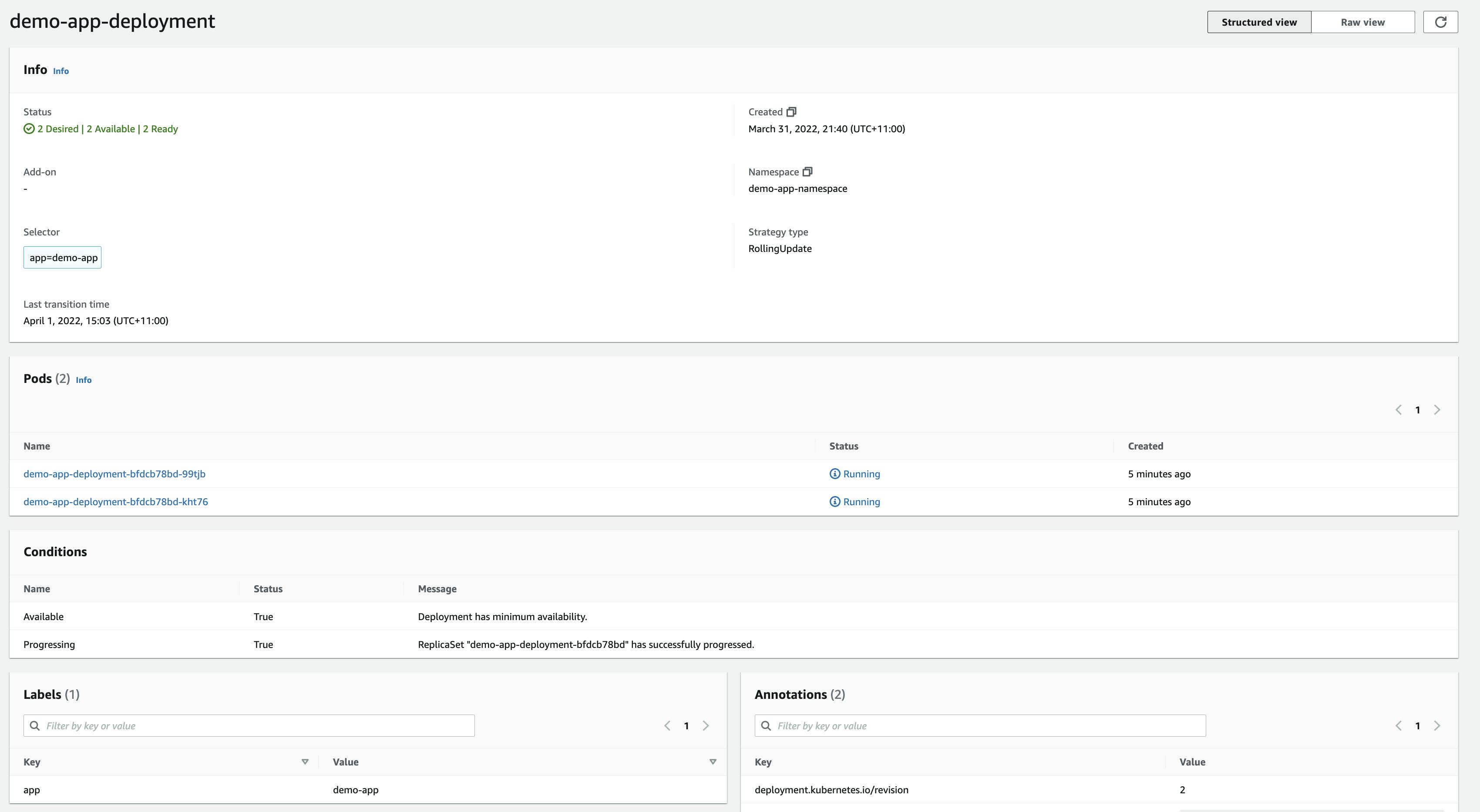

We can verify in the EKS console that the deployment was successful:

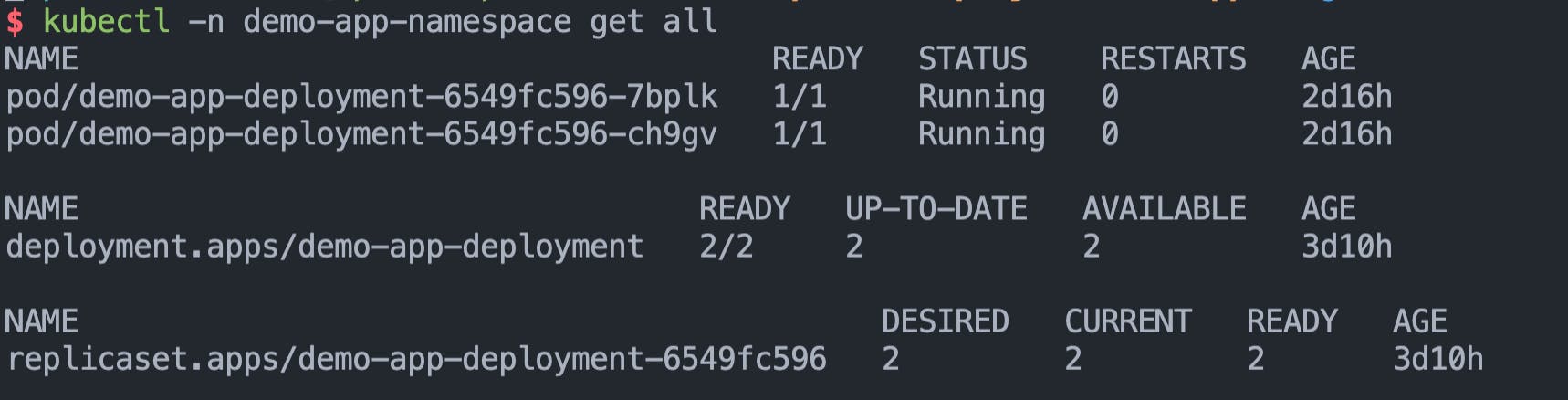

Check the resources deployed in the app namespace using kubectl:

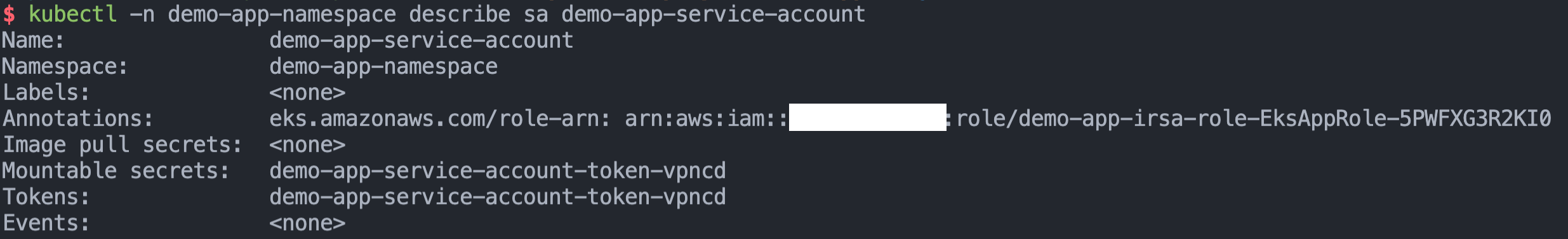

Describe the service account as well to verify that it carries the IAM Role annotation:

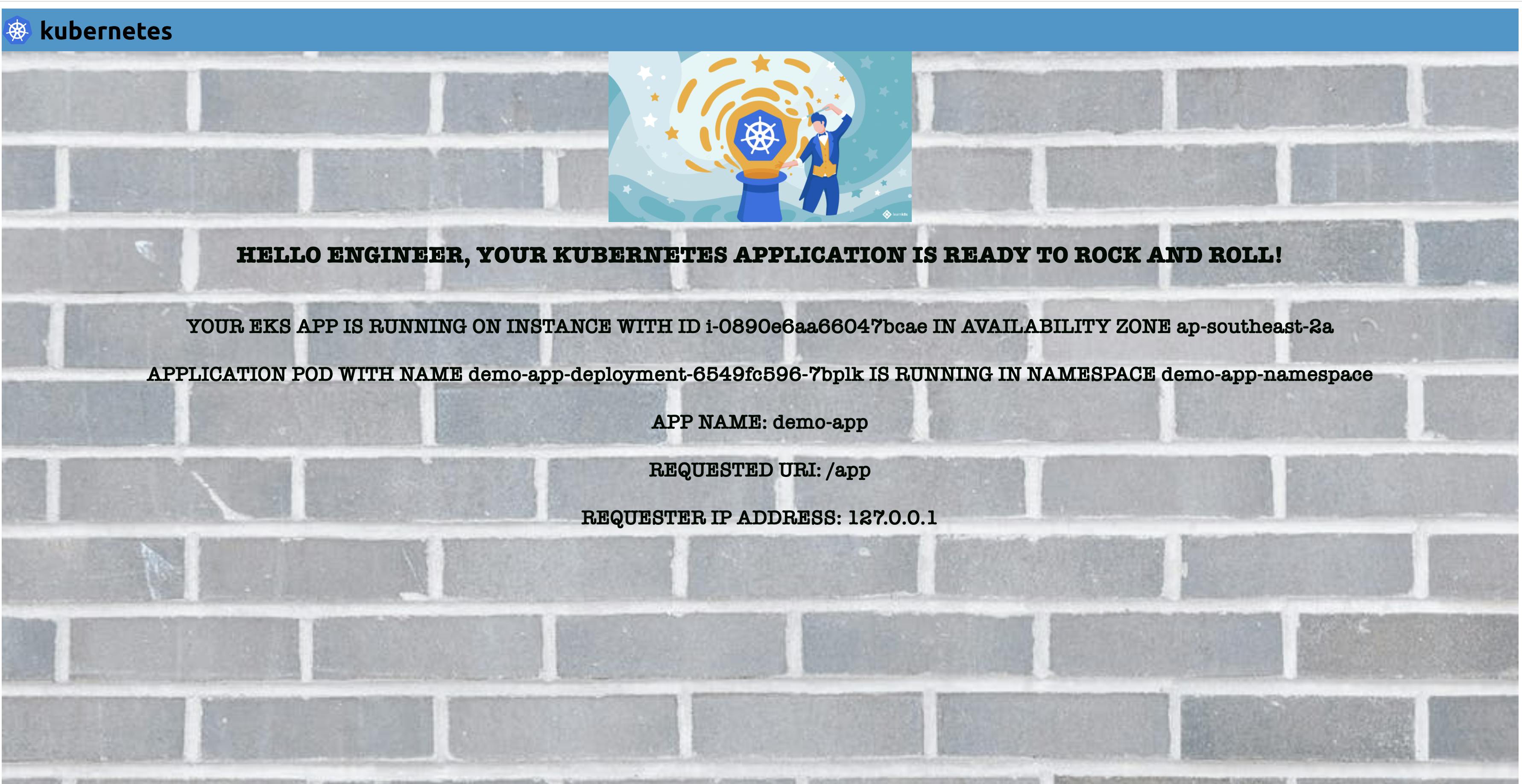

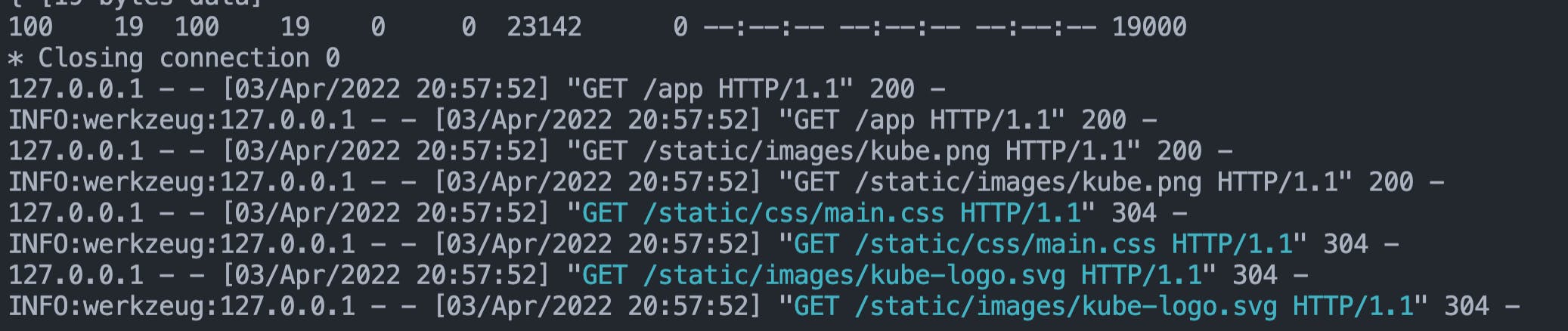

Port forwarding to test the application locally

To test whether the application works as expected in our EKS environment, we can use the kubectl port-forward command to forward a port from the pod to localhost and try the application from our own machine.

Run make port-forward-eks-app to forward the EKS app's port 5000 to port 80 on localhost.
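The make target presumably wraps a kubectl port-forward call. The sketch below builds such a command, assuming the deployment is addressed as deployment/NAME (a form kubectl port-forward accepts) and using LOCAL:REMOTE port syntax. The function name is hypothetical.

```python
from typing import List


def port_forward_command(
    namespace: str, deployment: str, local_port: int = 80, remote_port: int = 5000
) -> List[str]:
    """Arguments for forwarding localhost:local_port to the app's remote_port."""
    for port in (local_port, remote_port):
        if not 1 <= port <= 65535:
            raise ValueError(f"invalid port: {port}")
    return [
        "kubectl", "port-forward",
        "-n", namespace,
        f"deployment/{deployment}",
        f"{local_port}:{remote_port}",
    ]
```

Two caveats: when given a deployment, kubectl forwards to a single pod, so refreshing the page will not alternate between the two replicas; and on Linux, binding a local port below 1024 such as 80 normally needs elevated privileges, so a port like 8080 can be easier.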

This is great: we are now able to verify that the application works by testing it locally 🎉

As mentioned before, the application displays the pod name and namespace, Availability Zone, client IP and instance ID, which can be super helpful when learning Kubernetes.

Let's check the application logs:

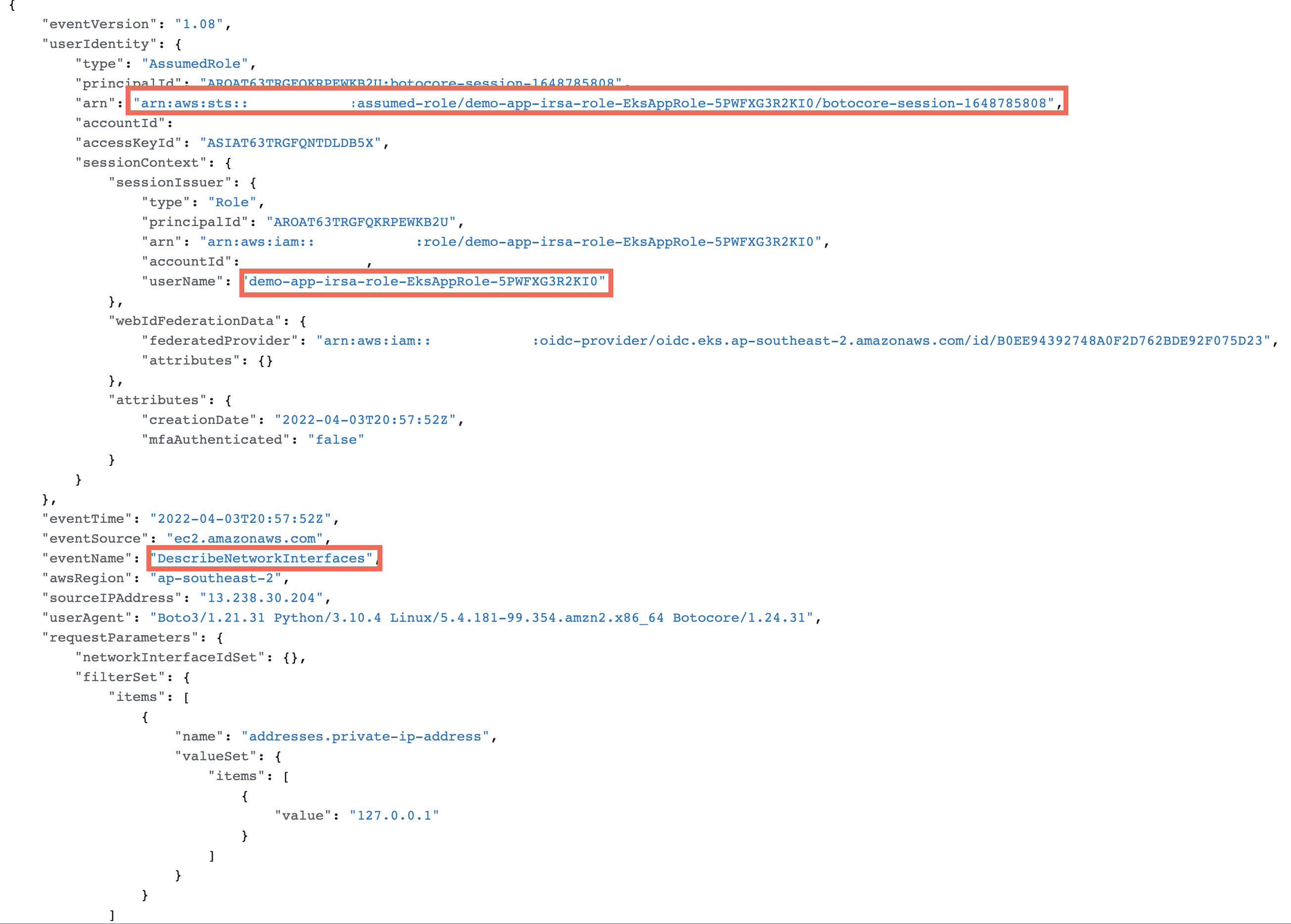

CloudTrail to verify use of the IRSA Role

AWS CloudTrail records the actions taken in our AWS accounts by users, IAM roles and AWS services, providing a holistic view of the operations taking place. This data helps meet governance, auditing and compliance requirements. Recorded events include actions taken in the AWS Management Console, the AWS Command Line Interface, and the AWS SDKs and APIs.

We can use CloudTrail to search by EventName and check whether our application container assumed the IRSA Role to make the ec2:DescribeNetworkInterfaces API call.

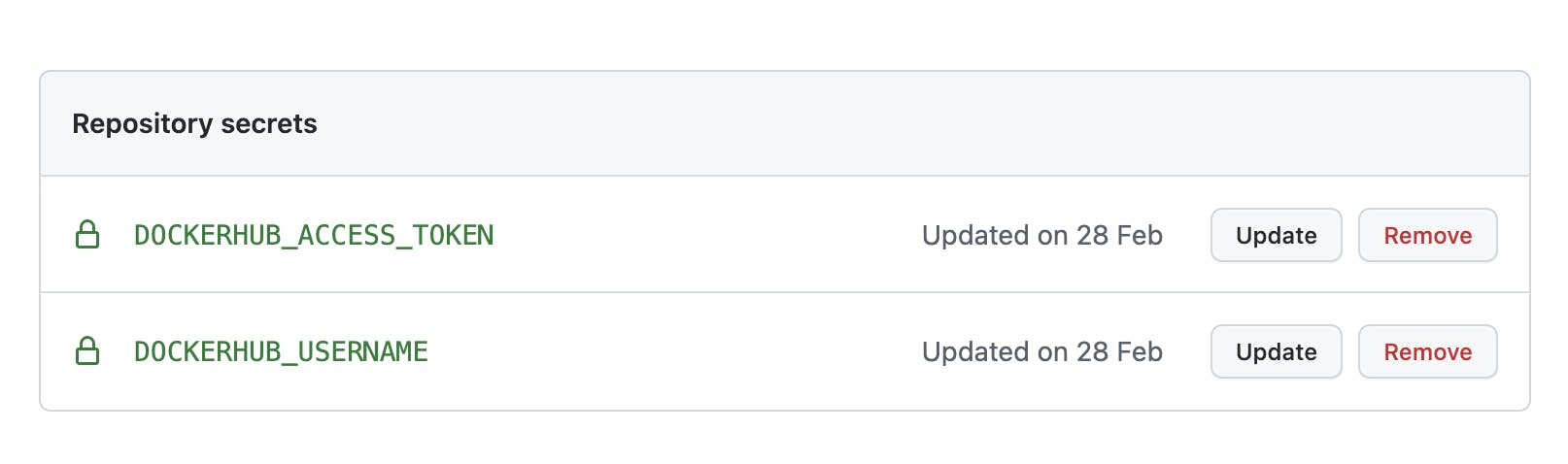

GitHub workflow to build and push the Docker image

Every time we merge a pull request into the mainline branch, we want to automatically build the Docker image and push it to Docker Hub. This follows the principles of Continuous Integration (CI) and Continuous Delivery (CD).

First, we need to create a Personal Access Token (PAT) for Docker Hub. Follow Docker Hub's guide to create one for your account. Store the PAT and your username as GitHub secrets.

The secret names are referenced in the GitHub workflow file at .github/workflows/build-eks-app.yaml.

Now, every time we merge a pull request into the mainline branch, the GitHub workflow starts, builds the Docker image and pushes it to Docker Hub.
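The workflow file is not reproduced in this post. Merging a pull request produces a push event on the mainline branch, and GitHub Actions exposes the commit and ref to every step through the GITHUB_SHA and GITHUB_REF environment variables. As a sketch of one possible tagging scheme (hypothetical, including the branch name main), a step could compute image tags like this:

```python
from typing import List, Mapping


def image_tags(environ: Mapping[str, str], image: str, mainline: str = "main") -> List[str]:
    """Tag with the short commit SHA; also tag 'latest' for pushes to the mainline branch."""
    sha = environ.get("GITHUB_SHA", "")
    ref = environ.get("GITHUB_REF", "")
    if not sha:
        raise ValueError("GITHUB_SHA is not set; is this running in GitHub Actions?")
    tags = [f"{image}:{sha[:7]}"]
    if ref == f"refs/heads/{mainline}":
        tags.append(f"{image}:latest")
    return tags
```

Tagging by commit SHA alongside latest makes it easy to tell which build a pod is running and to roll back to a specific image.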

Final thoughts

You have made it to the end of the blog post, thank you for reading it all the way 😎

I hope you enjoyed this blog post on how to deploy a sample application to AWS EKS.

In the next blog post, we will learn how to deploy an ingress controller so that we can connect to the application over the internet. An ingress controller will let us use a custom domain to reach the application through a load balancer deployed in AWS. We will also see how Kubernetes Ingress and Service objects tie together to make an application available inside and outside the cluster.